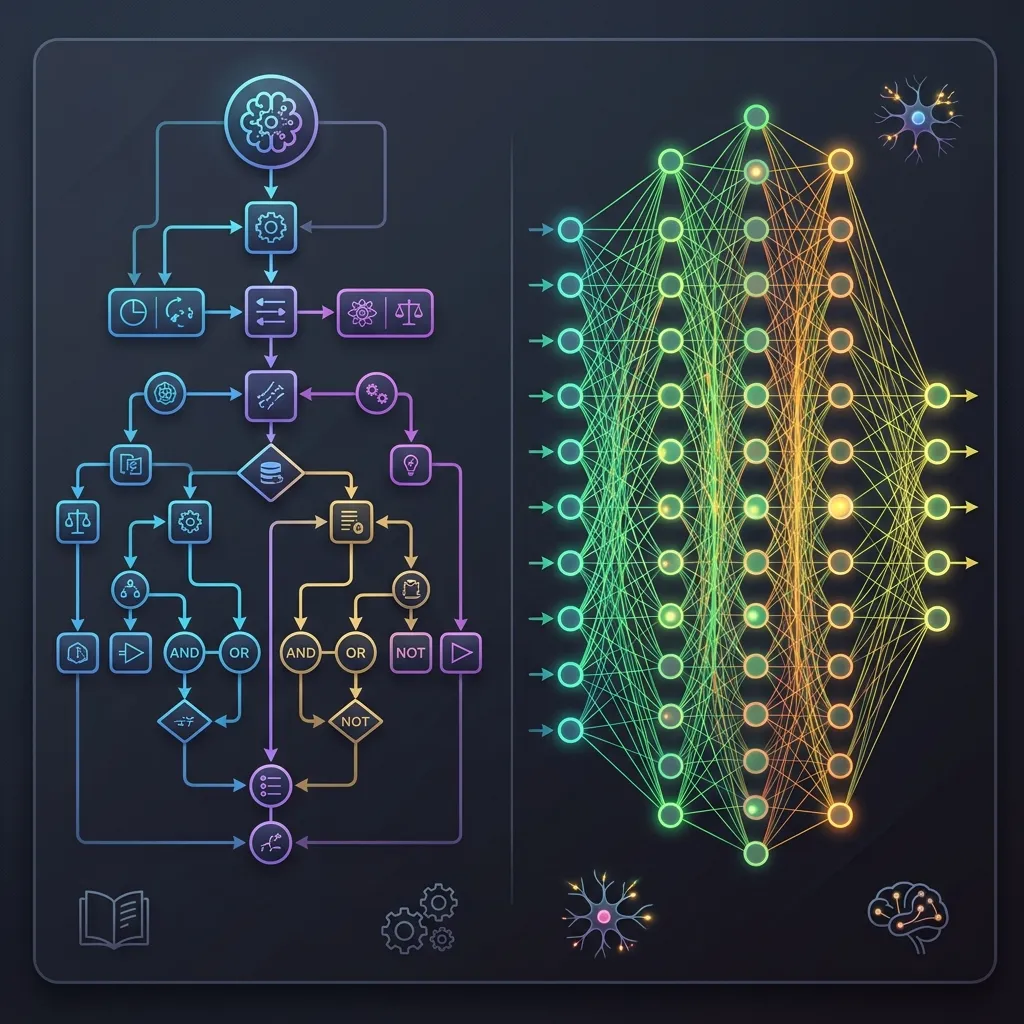

1.1 Symbolism vs. Connectionism

The quest for artificial intelligence has been defined by a fundamental philosophical and technical schism: Symbolism versus Connectionism. This debate is not merely historical; it shapes how we understand the capabilities and limitations of modern foundation models.

Motivation: Why This Matters Today

In the era of massive Foundation Models, understanding this split is not just academic trivia. It provides direct insight into:

- Hallucination and Reliability: Why LLMs can struggle with factual accuracy when statistical pattern learning is not enough on its own.

- Fast Patterning vs. Deliberate Reasoning: Connectionist systems are strong at intuitive perception and pattern completion, while symbolic systems were designed around explicit stepwise reasoning. Bridging that gap remains an active research direction.

The Metaphor: The Recipe Book vs. The Master Chef’s Intuition

Imagine you want to build a system that can cook delicious meals.

- The Symbolist Approach is like a massive Recipe Book. You sit down with world-class chefs and write down every possible rule: “If the steak is 1 inch thick, grill it for 4 minutes on each side.” “If the sauce is too acidic, add a pinch of sugar.” The intelligence lies in the explicit rules and logical combinations.

- The Connectionist Approach is like training a Master Chef from scratch. You don’t give them any recipes. Instead, you let them cook thousands of times, taste the results, and adjust their technique. Over time, they develop an intuitive understanding of how ingredients interact. They can’t explain the exact rules they follow, but they can create masterpiece dishes.

Modern foundation models are the largest and most commercially important expression of this “master chef” intuition.

A Brief History of the Schism

The tension between these two schools of thought has driven the famous “AI Winters” and summers.

- The Dawn (1950s): Symbolism dominated early AI with high hopes for general problem solvers. Simultaneously, Rosenblatt introduced the Perceptron (1958) [2], the ancestor of connectionism.

- The First Winter (1970s): Minsky and Papert published Perceptrons (1969) [3], proving that single-layer perceptrons cannot represent functions that are not linearly separable, such as XOR. The critique, coming from two of the field's leading authorities, became one of the most famous turning points in AI history. It contributed to a long lull in neural network research funding, and connectionism was pushed to the fringes of academia for years.

- The Expert System Era (1980s): Symbolism peaked with expert systems, but hit the “Knowledge Acquisition Bottleneck.” At the same time, Backpropagation was popularized by Rumelhart et al. (1986) [4], reviving connectionism.

- The Deep Learning Revolution (2010s-Present): Massive compute and data allowed connectionism to dominate, leading to the foundation models we use today.

The Symbolist Paradigm: Intelligence as Calculation

Symbolism, often referred to as Classical AI or GOFAI (Good Old-Fashioned AI), posits that intelligence is the manipulation of explicit symbols based on formal rules.

Core Philosophy

- Physical Symbol System Hypothesis: A physical symbol system has the necessary and sufficient means for general intelligent action (Newell & Simon, 1976) [1].

- Representation: Knowledge is represented as facts and rules (e.g., “If animal has feathers and can fly, then it is a bird”).

- Mechanism: Inference engines apply logical rules (deduction, induction) to derive new knowledge.
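The inference-engine mechanism described above can be sketched with a tiny forward-chaining loop. This is a minimal illustration, not a real expert-system shell: the fact names, the second rule, and the `forward_chain` helper are all hypothetical and chosen only to mirror the feathers-and-flight rule.

```python
# Hypothetical rules for illustration: (set of premises, conclusion).
RULES = [
    ({"has_feathers", "can_fly"}, "is_bird"),
    ({"is_bird", "sings"}, "is_songbird"),
]

def forward_chain(facts, rules):
    """Fire every rule whose premises are all known until nothing new is derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known

derived = forward_chain({"has_feathers", "can_fly", "sings"}, RULES)
print(sorted(derived))
```

Every derived fact can be traced back to the rule that produced it, which is the source of the interpretability usually credited to symbolic systems. An input that no rule anticipates simply derives nothing.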

Limitations

Symbolism hit a wall because extracting every rule of human expertise and coding it manually proved impossible for complex, real-world tasks. It failed at perception and handling ambiguity.

The Connectionist Paradigm: Intelligence as Emergence

Connectionism rejects the idea of explicit rules. Instead, it draws inspiration from the biological brain, proposing that intelligence emerges from the interaction of simple, interconnected processing units.

Core Philosophy

- Distributed Representation: Knowledge is not stored in a specific location or rule but is distributed across the weights of connections in a network.

- Learning: The system learns by adjusting connection weights based on data using algorithms like Backpropagation.

- Parallel Processing: Operations occur in parallel across the network.
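The idea of a distributed representation can be made concrete with a toy comparison. The sketch below assumes hand-picked binary vectors (hypothetical, not learned by any network) and contrasts a one-unit-per-concept encoding with one where each concept is a pattern over several units. Zeroing a single unit stands in for damage or noise.

```python
import math

# Hypothetical toy encodings, chosen by hand for illustration.
LOCALIST = {"cat": [1, 0, 0], "dog": [0, 1, 0], "car": [0, 0, 1]}
DISTRIBUTED = {
    "cat": [1, 1, 0, 0, 1, 0],
    "dog": [1, 0, 1, 0, 0, 1],
    "car": [0, 0, 0, 1, 1, 1],
}

def cosine(u, v):
    """Cosine similarity, defined as 0.0 when either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm > 0 else 0.0

def nearest(vec, table):
    """Return the best-matching concept name, or None if nothing overlaps."""
    best = max(table, key=lambda name: cosine(vec, table[name]))
    return best if cosine(vec, table[best]) > 0 else None

def damage(vec, index):
    """Simulate losing one unit by zeroing its activity."""
    out = list(vec)
    out[index] = 0
    return out

print(nearest(damage(LOCALIST["cat"], 0), LOCALIST))        # None
print(nearest(damage(DISTRIBUTED["cat"], 0), DISTRIBUTED))  # cat
```

In the one-unit-per-concept encoding, losing the single "cat" unit erases the concept. In the distributed encoding, the remaining active units still point to "cat", which is a small-scale picture of graceful degradation.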

Comparison: Symbolism vs. Connectionism

| Feature | Symbolism (GOFAI) | Connectionism (Deep Learning) |
|---|---|---|
| Basic Unit | Symbols, Rules, Logic | Neurons, Weights, Continuous Values |
| Learning | Hardcoded by experts (mostly) | Learned from data (Backprop) |
| Interpretability | High (Traceable rules) | Low (Black box matrices) |
| Perception | Poor (Struggles with images/audio) | Excellent (SOTA in vision/speech) |
| Reasoning | Excellent (Strict logic, math) | Poor (Struggles with long-chain logic) |
| Data Requirements | Low (Needs expert knowledge) | High (Needs massive datasets) |
| Handling Noise | Brittle (Fails on unmodeled inputs) | Robust (Graceful degradation) |

The Mathematical Contrast

We can contrast the two paradigms mathematically.

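Symbolic Inference often relies on boolean logic and set theory. For example, the feathers-and-flight rule from the Symbolist section can be written as:

$$\text{HasFeathers}(x) \land \text{CanFly}(x) \rightarrow \text{Bird}(x)$$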

Connectionist Inference relies on continuous functions and linear algebra:

$$y = \sigma(Wx + b)$$

where $W$ is the weight matrix, $x$ is the input vector, $b$ is the bias, and $\sigma$ is a non-linear activation function.
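As a worked instance of the connectionist computation (weight matrix times input vector, plus bias, passed through a non-linear activation), here is a minimal sketch in plain Python. The sigmoid is assumed as the activation, and the numeric values of `W`, `x`, and `b` are illustrative only.

```python
import math

def sigmoid(z):
    """Logistic activation, squashing any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def layer_forward(W, x, b):
    """Compute sigmoid(W x + b) for W given as a list of rows."""
    return [
        sigmoid(sum(w_ij * x_j for w_ij, x_j in zip(row, x)) + b_i)
        for row, b_i in zip(W, b)
    ]

# Illustrative values only (assumptions of this sketch).
W = [[1.0, -1.0],
     [0.5, 0.5]]
x = [1.0, 0.0]
b = [0.0, -0.5]

y = layer_forward(W, x, b)
print(y)
```

The pre-activations here are 1.0 and 0.0, so the two outputs are sigmoid(1.0) and sigmoid(0.0) = 0.5. Unlike a boolean rule, the result is a continuous value that changes smoothly as the weights change, which is what makes gradient-based learning possible.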

From Rules to Weights

Let’s see how these look in code. Below is a PyTorch example showing how a Connectionist approach (a simple neural network) learns to solve the XOR problem that Symbolism could easily describe but single-layer Perceptrons failed at.

```python
import torch
import torch.nn as nn
import torch.optim as optim

# The XOR data: four input pairs and their target outputs
X = torch.tensor([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]], dtype=torch.float32)
y = torch.tensor([[0.0], [1.0], [1.0], [0.0]], dtype=torch.float32)

# A simple Multi-Layer Perceptron (MLP)
class XORModel(nn.Module):
    def __init__(self):
        super().__init__()
        self.hidden = nn.Linear(2, 2)  # Hidden layer
        self.output = nn.Linear(2, 1)  # Output layer
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        x = self.sigmoid(self.hidden(x))
        x = self.sigmoid(self.output(x))
        return x

model = XORModel()
criterion = nn.BCELoss()
optimizer = optim.SGD(model.parameters(), lr=0.1)

# Training loop (full-batch gradient descent).
# Note: with only two hidden units and plain SGD, convergence depends on the
# random initialization; some runs may need more epochs or a restart.
for epoch in range(10000):
    outputs = model(X)
    loss = criterion(outputs, y)

    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

    if (epoch + 1) % 2000 == 0:
        print(f'Epoch [{epoch+1}/10000], Loss: {loss.item():.4f}')

# Test the model
with torch.no_grad():
    predicted = model(X)
    print(f"Predicted outputs:\n{predicted.round()}")
```

Example: The Connectionist Neuron

Consider a single artificial neuron with two inputs, two weights, and a bias. Changing the weights and bias changes the output. Can you choose values that make it act like an AND gate?

Symbolism vs Connectionism: The AND Gate

Both paradigms can solve the AND gate.

Symbolic (Rule-Based)

```python
def symbolic_and(A, B):
    # Explicit rule: output 1 only when both inputs are 1
    if A == 1 and B == 1:
        return 1
    else:
        return 0
```

Connectionist (Neural)

AND Gate Goal: Output should be 1 ONLY when both inputs are 1. A single neuron reaches this goal when its weights and bias are set so that the weighted sum crosses the threshold only for the input (1, 1).
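The neural side of the AND gate can be sketched in a few lines. This assumes a step activation that fires when the weighted sum is at least zero, and the weights `1.0, 1.0` with bias `-1.5` are just one hand-picked setting among many that work.

```python
def step(z):
    """Heaviside step: 1 if z >= 0, else 0."""
    return 1 if z >= 0 else 0

def neuron(a, b, w1, w2, bias):
    """A single artificial neuron: weighted sum plus bias, then a step."""
    return step(w1 * a + w2 * b + bias)

def symbolic_and(a, b):
    """The rule-based AND gate, for comparison."""
    return 1 if a == 1 and b == 1 else 0

# Hand-picked parameters (an assumption of this sketch, not learned).
w1, w2, bias = 1.0, 1.0, -1.5

for a in (0, 1):
    for b in (0, 1):
        print(a, b, symbolic_and(a, b), neuron(a, b, w1, w2, bias))
```

With these values the weighted sum is 0.5 for the input (1, 1) and negative for the other three inputs, so the neuron agrees with the rule on all four cases. Lowering both weights to 0.5 while keeping the bias at -1.5 breaks the gate, because even (1, 1) no longer reaches the threshold.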

Quizzes

Quiz 1: Why did Symbolism fail at Natural Language Processing (NLP)?

Symbolism relied on rigid grammatical rules and dictionaries. However, human language is highly contextual, ambiguous, and constantly evolving. Rules cannot capture all nuances, idioms, and edge cases. Connectionism succeeds by learning statistical associations from vast amounts of text, capturing context better.

Quiz 2: What was the “XOR Problem” and why was it significant?

The XOR (exclusive OR) problem is a simple classification task where the classes are not linearly separable. Minsky and Papert proved that a single-layer perceptron could not learn the XOR function. Their critique became part of the broader slowdown in neural network research that lasted over a decade (the first AI winter), until multi-layer networks trained with backpropagation were popularized in the 1980s.

Quiz 3: Are modern LLMs purely Connectionist?

While their core architecture (Transformer) is purely connectionist, relying on matrix multiplications and continuous representations, the way we use them sometimes bridges the gap. Prompt engineering, chain-of-thought reasoning, and tool use (like calling a calculator API) introduce symbolic-like operations on top of the connectionist core. The research field of “Neuro-symbolic AI” actively tries to combine the strengths of both (Garcez & Lamb, 2020) [5].

Quiz 4: Discuss the “Knowledge Acquisition Bottleneck” in Symbolist systems.

The Knowledge Acquisition Bottleneck refers to the difficulty of extracting knowledge from human experts and encoding it into explicit rules. Human experts often rely on intuition and tacit knowledge that they cannot easily verbalize. Furthermore, the number of rules required for complex domains grows rapidly as exceptions and rule interactions accumulate, making manual curation intractable.

Quiz 5: How does the concept of “Distributed Representation” in Connectionism differ from Symbolic representation?

In Symbolism, a concept is represented by a specific symbol (e.g., a node in a graph or a specific variable). In Connectionism, a concept is represented by a pattern of activity across many units (neurons). No single neuron represents the concept; it is the combination of weights and activations that stores the information. This allows for graceful degradation and generalization.

Quiz 6: Provide a formal mathematical proof showing that a single-layer perceptron with a step function activation cannot solve the XOR problem.

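Let the inputs be $x_1, x_2 \in \{0, 1\}$. A single-layer perceptron computes $y = H(w_1 x_1 + w_2 x_2 - \theta)$, where $H$ is the Heaviside step function (taking $H(z) = 1$ for $z \ge 0$ and $H(z) = 0$ otherwise) and $\theta$ is the threshold. For XOR, we need the following inequalities to hold:

- For $(0, 0) \mapsto 0$: $-\theta < 0$, i.e. $\theta > 0$ (1)

- For $(0, 1) \mapsto 1$: $w_2 \ge \theta$ (2)

- For $(1, 0) \mapsto 1$: $w_1 \ge \theta$ (3)

- For $(1, 1) \mapsto 0$: $w_1 + w_2 < \theta$ (4)

From (2) and (3), we have $w_1 + w_2 \ge 2\theta$. Since (1) states $\theta > 0$, this implies $w_1 + w_2 \ge 2\theta > \theta$. However, this directly contradicts inequality (4), which requires $w_1 + w_2 < \theta$. Therefore, no such weights and threshold exist, proving that a single-layer perceptron cannot solve XOR.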
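The conclusion can also be sanity-checked numerically. The sketch below brute-forces a small grid of weights and thresholds for a single step-activated unit, assuming the convention that the unit fires when the weighted sum minus the threshold is at least zero. A finite grid search is not a proof, only a check that agrees with one; the grid range and the `find_perceptron` helper are hypothetical choices.

```python
from itertools import product

def step(z):
    """Heaviside step: 1 if z >= 0, else 0."""
    return 1 if z >= 0 else 0

def find_perceptron(target, grid):
    """Return the first (w1, w2, theta) on the grid that reproduces target, or None."""
    for w1, w2, theta in product(grid, repeat=3):
        if all(step(w1 * x1 + w2 * x2 - theta) == out
               for (x1, x2), out in target.items()):
            return (w1, w2, theta)
    return None

AND = {(0, 0): 0, (0, 1): 0, (1, 0): 0, (1, 1): 1}
XOR = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

# Candidate values -4.0, -3.5, ..., 4.0 for each of w1, w2, theta.
grid = [i / 2 for i in range(-8, 9)]

print("AND:", find_perceptron(AND, grid))
print("XOR:", find_perceptron(XOR, grid))
```

The search finds a parameter triple for AND but returns `None` for XOR, as the contradiction between the four inequalities predicts.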

References

1. Newell, A., & Simon, H. A. (1976). Computer science as empirical inquiry: Symbols and search. Communications of the ACM, 19(3), 113-126.

2. Rosenblatt, F. (1958). The perceptron: A probabilistic model for information storage and organization in the brain. Psychological Review, 65(6), 386.

3. Minsky, M., & Papert, S. (1969). Perceptrons. MIT Press.

4. Rumelhart, D. E., Hinton, G. E., & Williams, R. J. (1986). Learning representations by back-propagating errors. Nature, 323(6088), 533-536.

5. Garcez, A. D., & Lamb, L. C. (2020). Neurosymbolic AI: The 3rd Wave. arXiv:2012.05876.