9.2 Dataset Quality vs Quantity

This chapter explores one of the most profound paradigm shifts in modern Foundation Model engineering: the transition from Model-Centric and Volume-Centric scaling to Data-Centric curation. While pre-training relies heavily on massive, diverse datasets to build world knowledge, the post-training and alignment phases (Supervised Fine-Tuning, RLHF/DPO) operate under a strictly inverted scaling law. Here, dataset composition and quality strictly dominate raw volume.

The Paradigm Shift: From Big Data to Smart Data

In the early deep learning era, scaling laws dictated that expanding dataset volume inevitably yielded better models. If a model misclassified an edge case, the prevailing engineering reflex was to increase the parameter count or scrape more data.

However, as models scaled into the hundreds of billions of parameters, researchers observed rapid diminishing returns when fine-tuning on unstructured “Dark Data” (noisy, uncurated web scrapes or broad organizational data). While expanding data quantity increases storage and compute costs linearly, it often introduces contradictory gradient signals that stall convergence. Today’s state-of-the-art (SOTA) engineering focuses on Data-Centric AI, where the primary engineering effort is spent identifying and resolving specific noise in the training distribution rather than tuning model hyperparameters.

The Superficial Alignment Hypothesis (LIMA)

The foundational text for the “Quality over Quantity” movement in alignment is the LIMA (Less Is More for Alignment) paper (Zhou et al., 2023) [1]. The researchers fine-tuned a 65B parameter LLaMA model using only 1,000 carefully curated prompts and responses, entirely bypassing Reinforcement Learning from Human Feedback (RLHF). Despite the minuscule dataset, LIMA was competitive with or strictly preferred over GPT-4 in 43% of cases.

This led to the Superficial Alignment Hypothesis:

A model’s core knowledge and reasoning capabilities are learned almost entirely during pre-training. Alignment (SFT) merely teaches the model which sub-distribution of formats (e.g., a helpful assistant persona) it should use when interacting with users.

Because SFT does not inject new knowledge, massive datasets are unnecessary. In fact, large SFT datasets often contain subtle formatting inconsistencies, factual errors, or stylistic regressions that actively degrade the model’s pre-trained capabilities—a phenomenon known as the Alignment Tax.

The Mathematics of Label Noise

To understand why noisy data is so destructive during SFT, consider the standard autoregressive Cross-Entropy loss objective:

When training on high-quality, “gold-standard” data, the target distribution is sharp. The model easily minimizes the Kullback-Leibler (KL) divergence because the mapping from instruction to response is consistent.

If the dataset contains low-quality or contradictory responses (noise), the target distribution becomes high-entropy. The gradient updates begin to conflict. The model wastes its parameter capacity attempting to model the irreducible entropy of the noise, flattening its own predictive distribution . This leads to hallucination and loss spikes, requiring exponential compute to average out the noise.

Automated Data Curation: Filtering the Noise

Manually curating 1,000 perfect examples (like LIMA) is feasible, but scaling high-quality data to 10k or 100k examples requires automation. The AlpaGasus paper (Chen et al., 2023) [2] demonstrated how to achieve this using an LLM-as-a-Judge pipeline.

The researchers took the popular 52k Alpaca dataset—which contained many low-quality, irrelevant, or hallucinated responses—and used GPT-4 to score each pair on a scale of 1 to 5. By discarding anything below a threshold, they distilled the dataset down to 9k high-quality examples. Fine-tuning on this 9k subset not only reduced training time by 83% (from 80 minutes to 14 minutes) but also significantly outperformed the model trained on the full 52k dataset.

Engineering the Data Filter (PyTorch)

In a modern MLOps pipeline, filtering is often done using a dedicated Reward Model (RM) or a Sequence Classification model rather than an expensive API call. Below is a realistic PyTorch implementation using Hugging Face to automatically score and filter an SFT dataset.

import torch

from transformers import AutoModelForSequenceClassification, AutoTokenizer

from typing import List, Dict

def filter_sft_data(

prompts: List[str],

responses: List[str],

model_id: str = "OpenAssistant/reward-model-deberta-v3-large-v2",

threshold: float = 1.5

) -> List[Dict[str, str]]:

"""

Filters SFT instruction-response pairs using a pre-trained Reward Model.

Pairs scoring below the given threshold are discarded to ensure high data quality.

"""

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Initialize tokenizer and sequence classification (reward) model

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForSequenceClassification.from_pretrained(model_id).to(device)

model.eval()

filtered_dataset = []

with torch.no_grad():

for prompt, response in zip(prompts, responses):

# Format input as typically expected by the RM

text = f"<|prompter|>{prompt}<|endoftext|><|assistant|>{response}<|endoftext|>"

inputs = tokenizer(

text,

return_tensors="pt",

truncation=True,

max_length=512

).to(device)

# Forward pass to get scalar reward (logit)

outputs = model(**inputs)

score = outputs.logits[0].item()

if score >= threshold:

filtered_dataset.append({

"prompt": prompt,

"response": response,

"score": round(score, 3)

})

return filtered_dataset

# Example Execution

sample_prompts = [

"Explain the concept of backpropagation.",

"Write a python script to reverse a string.",

"asdfasdfasdf" # Intentional noise

]

sample_responses = [

"Backpropagation is an algorithm used to calculate gradients in neural networks by applying the chain rule backwards from the output.",

"print('hello'[::-1])",

"I am an AI assistant." # Low quality response to noise

]

# filtered_data = filter_sft_data(sample_prompts, sample_responses, threshold=1.0)

# Expected output: Only the first two pairs are retained.Synthetic Data & Small Language Models (SLMs)

The ultimate manifestation of “Quality over Quantity” is the use of Synthetic Data. As the internet runs out of high-quality, human-generated text, SOTA models increasingly rely on “Teacher Models” to generate textbook-grade data.

- TinyStories (Eldan & Li, 2023) [3]: Researchers proved that models with fewer than 10 million parameters could generate coherent, grammatically perfect English and demonstrate basic reasoning. The secret? Training exclusively on a synthetic dataset of short stories restricted to the vocabulary of a 4-year-old. By removing the noise and complexity of the open web, the tiny model could focus entirely on syntax and logic.

- Microsoft Phi-3 (Abdin et al., 2024) [4]: The 3.8B parameter Phi-3-mini model rivals the performance of models 10x its size (like Mixtral 8x7B). The innovation was entirely in the dataset: 3.3 trillion tokens of heavily filtered web data and synthetic “textbook” data. Phi-3 proves that high-quality data allows SLMs to punch significantly above their weight class, enabling powerful on-device AI.

The Duplication Threshold

While filtering noise is critical, how we handle the remaining high-quality data is equally nuanced. Recent empirical studies on data duplication reveal a highly non-linear relationship between data repetition and model performance.

- The Sweet Spot (~25% Duplication): Injecting a minimal amount of duplication for highly critical reasoning tasks can actually improve accuracy (acting as an implicit importance weight). It reinforces syntactic structures without causing catastrophic forgetting.

- The Collapse (100% Duplication): If a dataset is heavily duplicated, the model rapidly overfits to the specific entities and phrasing of the training set. Performance on Out-Of-Distribution (OOD) tasks can drop by up to 40%. The model stops learning the underlying reasoning manifold and simply memorizes the duplicated sequence.

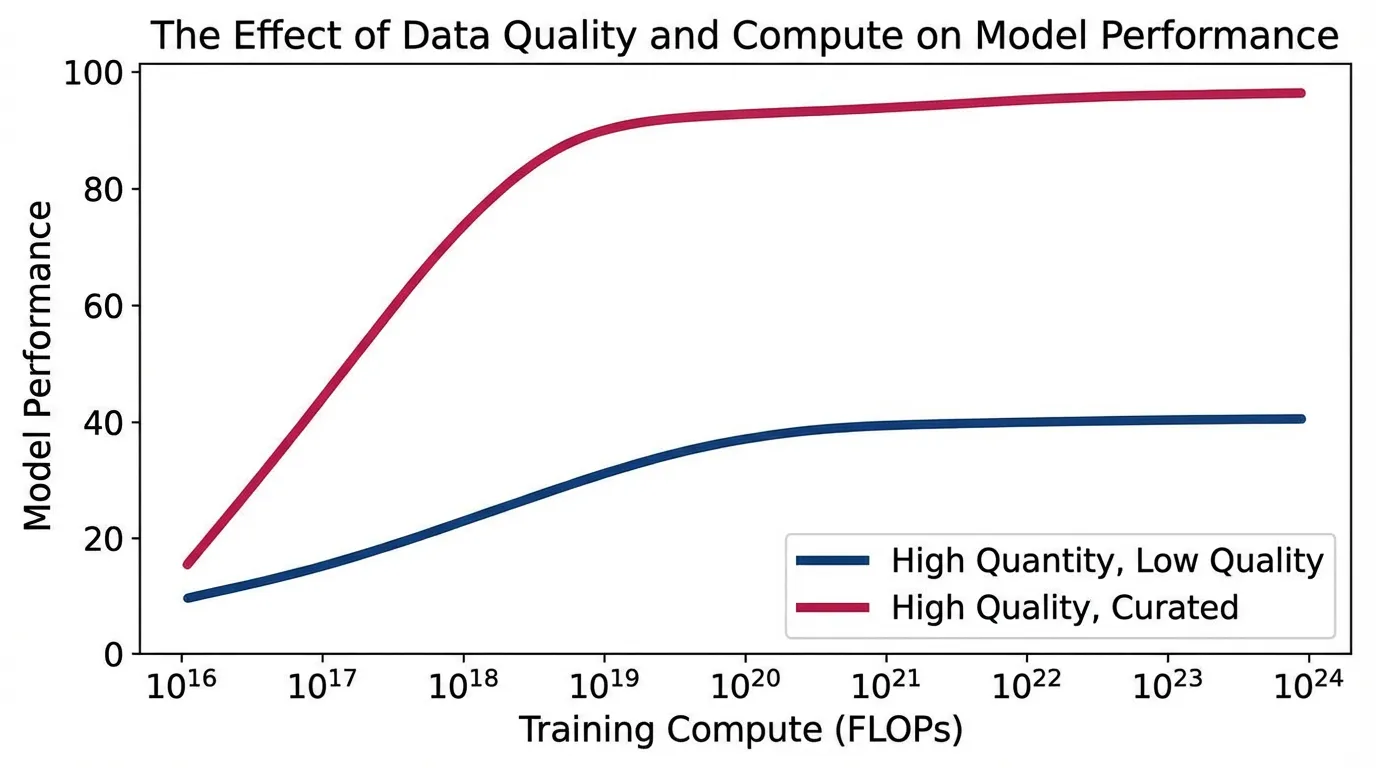

Interactive Visualizer: Quality vs. Quantity Trade-offs

Use the interactive component below to simulate the relationship between Dataset Size, Noise Level, and Duplication Rate. Notice how increasing dataset size yields diminishing returns if the noise level is high, and how extreme duplication causes performance collapse.

Data Quality vs. Quantity Simulator

Adjust the sliders to see how data curation impacts SFT model performance.

Estimated Model Performance (OOD Generalization)

Summary & Next Steps

The transition from volume to curation is the defining characteristic of modern post-training. By heavily filtering datasets, utilizing LLM-as-a-Judge pipelines, and synthesizing textbook-quality examples, engineers can train smaller, faster models that rival legacy behemoths at a fraction of the compute cost.

However, even with perfectly curated datasets, fine-tuning an entire 70B parameter model is computationally prohibitive for most organizations. How do we apply these high-quality datasets to massive models without requiring clusters of H100 GPUs? In the next section, 9.3 Parameter-Efficient Fine-Tuning (PEFT), we will explore mathematical techniques like LoRA and DoRA that allow us to fine-tune massive models by updating only a fraction of a percent of their weights.

Quizzes

Quiz 1: According to the Superficial Alignment Hypothesis, why does alignment require orders of magnitude less data than pre-training?

Because alignment does not inject new factual knowledge or reasoning capabilities into the model. All core knowledge is acquired during the massive pre-training phase. Alignment merely teaches the model the specific format, style, and persona (e.g., helpful assistant) required to surface that latent knowledge.

Quiz 2: Mathematically, why does label noise in an SFT dataset severely degrade model performance?

Label noise increases the entropy of the target distribution . When optimizing the Cross-Entropy loss, the model receives conflicting gradient updates for similar inputs. Instead of converging on a sharp reasoning manifold, the model wastes parameter capacity attempting to model the irreducible noise, flattening its predictive distribution and leading to hallucinations.

Quiz 3: When using an LLM-as-a-Judge (like GPT-4) to filter SFT data, what is a potential systemic risk introduced into the resulting dataset?

The distilled dataset may inherit the specific stylistic biases, verbosity, or “mode collapse” of the judge model. The student model might learn to mimic the judge’s specific tone rather than generating diverse, human-like responses, potentially limiting its creative variance.

Quiz 4: Why does a small amount of data duplication (~25%) sometimes improve accuracy, while 100% duplication catastrophically degrades it?

Minimal duplication acts as an implicit importance weight, reinforcing critical syntactic structures and reasoning paths without overwhelming the training distribution. However, excessive duplication (100%) leads to severe overfitting. The model stops generalizing and instead memorizes the specific duplicated sequences, drastically reducing its performance on Out-Of-Distribution (OOD) tasks.

Quiz 5: Mathematically formulate the Pareto optimal frontier equation defining the elastic trade-off between data volume and label noise for a constant test error .

Using standard neural scaling laws, the test error can be modeled as , where is the volume scaling exponent and is the noise sensitivity exponent. To find the Pareto optimal frontier where an increase in data volume compensates for an increase in label noise for a constant test error , we set the total derivative to zero: . Solving for the elasticity of the trade-off yields the Pareto optimal frontier equation: .

References

- Zhou, C., et al. (2023). LIMA: Less Is More for Alignment. arXiv:2305.11206.

- Chen, L., et al. (2023). AlpaGasus: Training A Better Alpaca with Fewer Data. arXiv:2307.08701.

- Eldan, R., & Li, Y. (2023). TinyStories: How Small Can Language Models Be and Still Speak Coherent English?. arXiv:2305.07759.

- Abdin, M., et al. (2024). Phi-3 Technical Report: A Highly Capable Language Model Locally on Your Phone. arXiv:2404.14219.